Why Your Next Model Won't Run in the Cloud

For a decade, "AI" was synonymous with massive GPU clusters in data centers. In 2026, the pendulum is swinging back to the edge. The reason? Physics and Privacy.

The Latency Cliff

Real-time applications simply cannot afford the round-trip time (RTT) to a data center.

- Autonomous Vehicles: A 200 ms latency spike can mean the difference between braking and a collision. At 100 km/h, a vehicle travels roughly 5.6 m in that time.

- AR/VR: The "motion-to-photon" latency must stay under roughly 20 ms to prevent nausea. A round trip to a distant data center can consume that entire budget before a single frame is rendered.
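The latency arithmetic behind both examples can be sketched in a few lines. This is a minimal illustration, not a measurement: the function names are made up for this post, and the per-stage timings (including the 40 ms network round trip) are hypothetical placeholders.

```python
def distance_during_latency(speed_kmh: float, latency_ms: float) -> float:
    """Metres travelled at constant speed while waiting latency_ms."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * latency_ms / 1000


def fits_budget(stage_ms: dict[str, float], budget_ms: float = 20.0) -> bool:
    """True if the summed pipeline stages stay within the latency budget."""
    return sum(stage_ms.values()) <= budget_ms


# Hypothetical AR pipeline timings in milliseconds (illustrative only).
local_pipeline = {"tracking": 4.0, "inference": 6.0, "render": 7.0}
cloud_pipeline = {**local_pipeline, "network_rtt": 40.0}

print(round(distance_during_latency(50, 200), 2))  # 2.78 metres at city speed
print(fits_budget(local_pipeline))                 # True  (17 ms total)
print(fits_budget(cloud_pipeline))                 # False (57 ms total)
```

Under these assumed numbers, the on-device pipeline fits the 20 ms budget, while adding any realistic network hop pushes it well past.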

Generative AI on the Edge

The breakthrough of 2026 is High-Quality Quantization.

- 4-bit Quantization (GGUF/AWQ): We can now run Llama-4-8B models on a standard iPhone 17 or Pixel 11 with negligible perplexity loss.

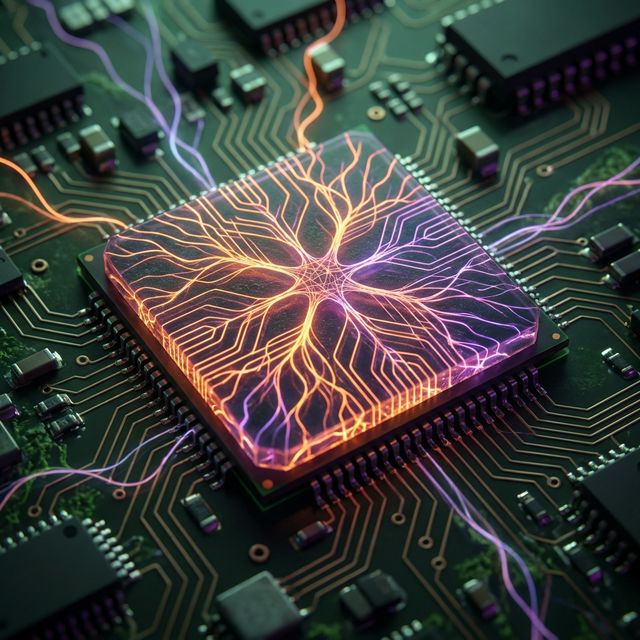

- NPU Acceleration: Mobile chips (Apple A19, Snapdragon 8 Gen 5) now have dedicated Neural Processing Units (NPUs) capable of 50+ TOPS (trillions of operations per second), built to accelerate the matrix multiplications that dominate neural network inference.
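To see why 4-bit weights matter on a phone, here is a rough sketch of the memory arithmetic plus a toy quantizer. The quantizer is plain symmetric round-to-nearest with a single scale per group, chosen for clarity. It is not the scheme used by GGUF or AWQ, which rely on more refined methods, and the function names are illustrative.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Ignores scale factors, activations, and the KV cache.
    """
    return num_params * bits_per_weight / 8 / 1e9


def quantize_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric round-to-nearest quantization to signed codes in [-7, 7]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # one scale for the whole group
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Map integer codes back to approximate float weights."""
    return [c * scale for c in codes]


print(weight_memory_gb(8e9, 16))  # 16.0 GB at 16-bit precision
print(weight_memory_gb(8e9, 4))   # 4.0 GB at 4-bit precision

group = [0.7, -0.32, 0.11, 0.0]
codes, scale = quantize_4bit(group)
restored = dequantize(codes, scale)
worst_error = max(abs(w - r) for w, r in zip(group, restored))
print(codes)                             # [7, -3, 1, 0]
print(worst_error <= scale / 2 + 1e-12)  # True: error is at most half a step
```

The fourfold drop in weight storage is what brings an 8B-parameter model within reach of a phone's memory. The rounding error per weight is bounded by half the quantization step.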

Privacy by Design

Users are increasingly wary of sending personal data to the cloud.

- On-Device RAG: Your phone can index your emails, messages, and photos locally. An LLM running on your phone can answer "When is my meeting with John?" without that data ever leaving your device.

- Health Monitoring: Smartwatches can run arrhythmia detection on-device, so raw health data stays private.
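The on-device RAG flow can be sketched as a toy: index local text, retrieve the best match for a question, and build a prompt for a local model. Everything here is an assumption made for illustration. `LocalIndex` and `build_prompt` are hypothetical names, retrieval uses simple word overlap rather than embeddings, and the call to an on-device LLM is left out.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


class LocalIndex:
    """Toy in-memory index; nothing here touches the network."""

    def __init__(self) -> None:
        self.docs: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self.docs.append((text, Counter(tokenize(text))))

    def search(self, query: str, k: int = 1) -> list[str]:
        """Return up to k documents ranked by query-word overlap."""
        query_terms = set(tokenize(query))
        scored = [(sum(counts[t] for t in query_terms), text)
                  for text, counts in self.docs]
        scored = [item for item in scored if item[0] > 0]
        scored.sort(key=lambda item: item[0], reverse=True)
        return [text for _, text in scored[:k]]


def build_prompt(question: str, context: list[str]) -> str:
    """Assemble the text a local model would receive."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Context:\n{joined}\n\nQuestion: {question}"


index = LocalIndex()
index.add("Meeting with John on Tuesday at 3pm in Room 4")
index.add("Your package has shipped")
index.add("Dinner with Sarah on Friday")

question = "When is my meeting with John?"
hits = index.search(question)
print(hits)  # ['Meeting with John on Tuesday at 3pm in Room 4']
print(build_prompt(question, hits))
```

The point of the design is that both the index and the prompt live in local memory. A production system would likely swap the word-overlap scoring for an embedding model that also runs on the device.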

The Hybrid Future

We aren't abandoning the cloud. We are moving to a Tiered Architecture:

- Tier 1 (Device): Instant, private inference for 80% of tasks (summarization, autocomplete).

- Tier 2 (Edge Node): Compute at a nearby site, such as a local 5G tower, for heavier processing that still needs low latency.

- Tier 3 (Cloud): Massive reasoning models (like GPT-6) for complex, non-time-sensitive queries.
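One way to picture the tiers is as a routing decision made per task. The sketch below is a hedged illustration only: the `Task` fields, the relative compute units, and the capacity thresholds are all hypothetical, and a real router would also weigh battery, connectivity, and cost.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    DEVICE = 1
    EDGE_NODE = 2
    CLOUD = 3


@dataclass
class Task:
    name: str
    est_compute: float            # hypothetical relative cost; 1.0 = device limit
    time_sensitive: bool = False
    private: bool = False


def route(task: Task, device_capacity: float = 1.0,
          edge_capacity: float = 10.0) -> Tier:
    """Pick the lowest tier that can serve the task (assumed thresholds)."""
    # Private data stays on the device regardless of cost.
    if task.private or task.est_compute <= device_capacity:
        return Tier.DEVICE
    # Heavier or latency-bound work goes to the nearby edge node.
    if task.time_sensitive or task.est_compute <= edge_capacity:
        return Tier.EDGE_NODE
    # Everything else can tolerate the round trip to the cloud.
    return Tier.CLOUD


print(route(Task("autocomplete", 0.2)))                          # Tier.DEVICE
print(route(Task("scene understanding", 5.0, time_sensitive=True)))  # Tier.EDGE_NODE
print(route(Task("multi-step research query", 50.0)))            # Tier.CLOUD
```

Checking the device first keeps the common case fast and private, and the cloud becomes the fallback rather than the default.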

We are currently benchmarking Edge NPU performance across major mobile chipsets.