Best AI Model Comparison Tools for Developers in 2026

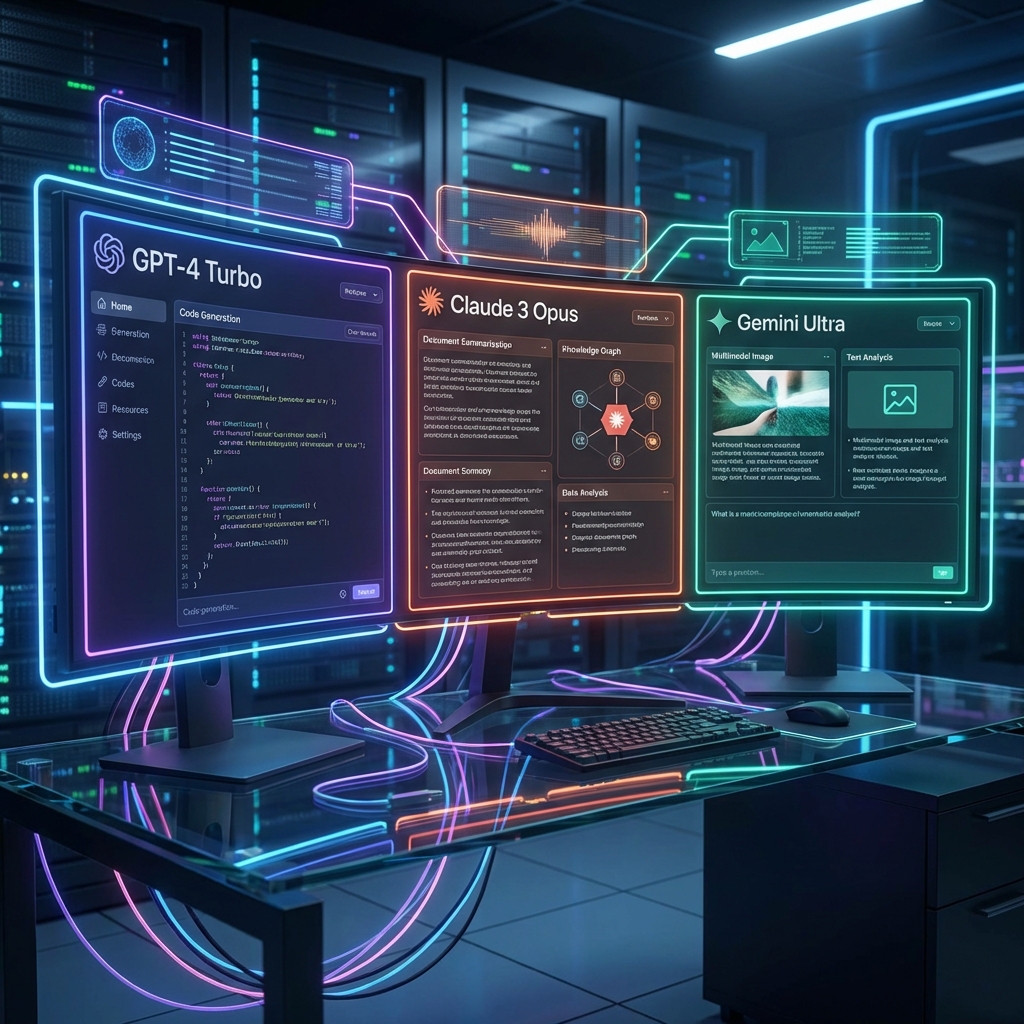

In 2026, the number of available Large Language Models (LLMs) has exploded. Developers are no longer just choosing between GPT-4 and Claude 3.5; they are navigating a complex ecosystem of specialized models, open-source weights, and varying cost structures.

Choosing the wrong model can cost your startup thousands in API fees or result in poor user experiences. This is where AI model comparison tools become essential.

Why Manual Testing Is Dead

Historically, developers would open multiple browser tabs, copy-paste the same prompt, and eyeball the results. This "vibe check" methodology is:

- Unscientific: It lacks objective metrics.

- Slow: It doesn't scale to hundreds of prompts.

- Costly: It wastes engineering time.

Top Features to Look For in a Benchmarking Tool

When selecting a tool to test your AI stack, prioritize these features:

1. Simultaneous Execution

You need to run the same prompt across multiple models (e.g., GPT-4o, Claude 3.5 Sonnet, Gemini 1.5 Pro) concurrently, so that latency and output quality are measured under the same conditions and can be compared directly.
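
As a rough illustration of the idea, here is a minimal Python sketch that fans one prompt out to several models concurrently and records per-model latency. The `fake_model_call` function, the model names, and the delays are all hypothetical stand-ins; in practice you would swap in whichever provider SDK you use.

```python
import asyncio
import time


# Hypothetical stand-in for a real provider call; replace with your own SDK call.
async def fake_model_call(model: str, prompt: str, delay_s: float) -> str:
    await asyncio.sleep(delay_s)  # simulates network and generation time
    return f"[{model}] response to: {prompt}"


async def timed_call(model: str, prompt: str, delay_s: float) -> dict:
    # Wrap a single call with a wall-clock timer.
    start = time.perf_counter()
    output = await fake_model_call(model, prompt, delay_s)
    latency_s = time.perf_counter() - start
    return {"model": model, "latency_s": latency_s, "output": output}


async def compare(prompt: str, models: dict[str, float]) -> list[dict]:
    # gather() runs every call concurrently; results are then sorted fastest first.
    results = await asyncio.gather(
        *(timed_call(name, prompt, delay) for name, delay in models.items())
    )
    return sorted(results, key=lambda r: r["latency_s"])


if __name__ == "__main__":
    # Simulated delays are made-up numbers, not measurements of any real model.
    simulated = {"model-a": 0.03, "model-b": 0.01, "model-c": 0.02}
    for row in asyncio.run(compare("Summarize this ticket.", simulated)):
        print(f'{row["model"]:<8} {row["latency_s"] * 1000:6.1f} ms')
```

Because the calls overlap, the total wall-clock time is close to the slowest model's latency rather than the sum of all of them.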

2. Cost Estimators

Token pricing varies wildly between models, and input and output tokens are often billed at different rates. A good tool will calculate the cost of your prompt and the generated response in real time.
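
The underlying arithmetic is simple, and a small sketch makes it concrete. The example below assumes per-1,000-token pricing with separate input and output rates; the `Pricing` class, the model names, and every price shown are illustrative placeholders, not real published rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_1k: float   # USD per 1,000 prompt tokens (placeholder value)
    output_per_1k: float  # USD per 1,000 generated tokens (placeholder value)


def estimate_cost(prompt_tokens: int, completion_tokens: int, pricing: Pricing) -> float:
    # cost = input tokens at the input rate + output tokens at the output rate
    input_cost = (prompt_tokens / 1000) * pricing.input_per_1k
    output_cost = (completion_tokens / 1000) * pricing.output_per_1k
    return input_cost + output_cost


# Hypothetical price table; look up current rates from each provider.
PRICES = {
    "model-a": Pricing(input_per_1k=0.0025, output_per_1k=0.0100),
    "model-b": Pricing(input_per_1k=0.0005, output_per_1k=0.0015),
}

if __name__ == "__main__":
    for name, pricing in PRICES.items():
        cost = estimate_cost(prompt_tokens=1200, completion_tokens=400, pricing=pricing)
        print(f"{name}: ${cost:.6f} per request")
```

Multiplying the per-request figure by your expected request volume gives a first-order estimate of monthly spend for each candidate model.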

3. Parameter Tuning

The ability to adjust temperature, top_p, and max_tokens across all models simultaneously is crucial for finding the "sweet spot" for your application.
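
One way to picture this is as a grid sweep: build every combination of the sampling parameters, then apply the same grid to each model. The sketch below only constructs the run configurations; the helper names and model names are hypothetical, and actually sending the requests is left to your provider client.

```python
from itertools import product


def parameter_grid(temperatures, top_ps, max_tokens_options):
    # Cartesian product of all parameter values, one dict per combination.
    return [
        {"temperature": t, "top_p": p, "max_tokens": m}
        for t, p, m in product(temperatures, top_ps, max_tokens_options)
    ]


def build_runs(models, grid):
    # Every model receives every configuration, so results stay comparable.
    return [{"model": model, **params} for model in models for params in grid]


if __name__ == "__main__":
    grid = parameter_grid(
        temperatures=[0.0, 0.7],
        top_ps=[0.9, 1.0],
        max_tokens_options=[256],
    )
    runs = build_runs(["model-a", "model-b"], grid)
    print(f"{len(runs)} runs planned")
    for run in runs:
        print(run)
```

The grid grows multiplicatively with each added value, so it is usually worth sweeping a few coarse values first and refining around the best-scoring region.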

The Solution: AI Playground

AI Playground by Neon Innovation Lab is designed specifically for this workflow. It aggregates over 40 top-tier and open-source models into a single, unified interface.

> [!TIP]
> Use AI Playground's Batch Mode to test your system prompts against 50+ user inputs at once.

With AI Playground, you can:

- Compare Outputs Side-by-Side: Visual diffing for text generation.

- Track Latency: See exactly which model is slowing down your chain.

- Analyze Costs: Get a breakdown of spend per 1k tokens.

Conclusion

Don't build in the dark. Use data-driven comparisons to select the engine that powers your product.