I Tested Multiple AI Models Side-by-Side: Here’s Why One Interface Matters

In 2026, the AI landscape is more fragmented than ever. With dozens of models from OpenAI, Anthropic, Google, and Meta, choosing the right one for a specific task often means juggling browser tabs and copy-pasting the same prompt between them.

That's why I built AI Playground.

The Benchmarking Headache

When you're building an AI-powered app, you need to know:

- Which model gives the most accurate response?

- How much does latency differ between models?

- Which model is the most cost-effective?

Doing this manually, one tab and one pasted prompt at a time, is slow and error-prone.

Enter AI Playground: Your Unified Dashboard

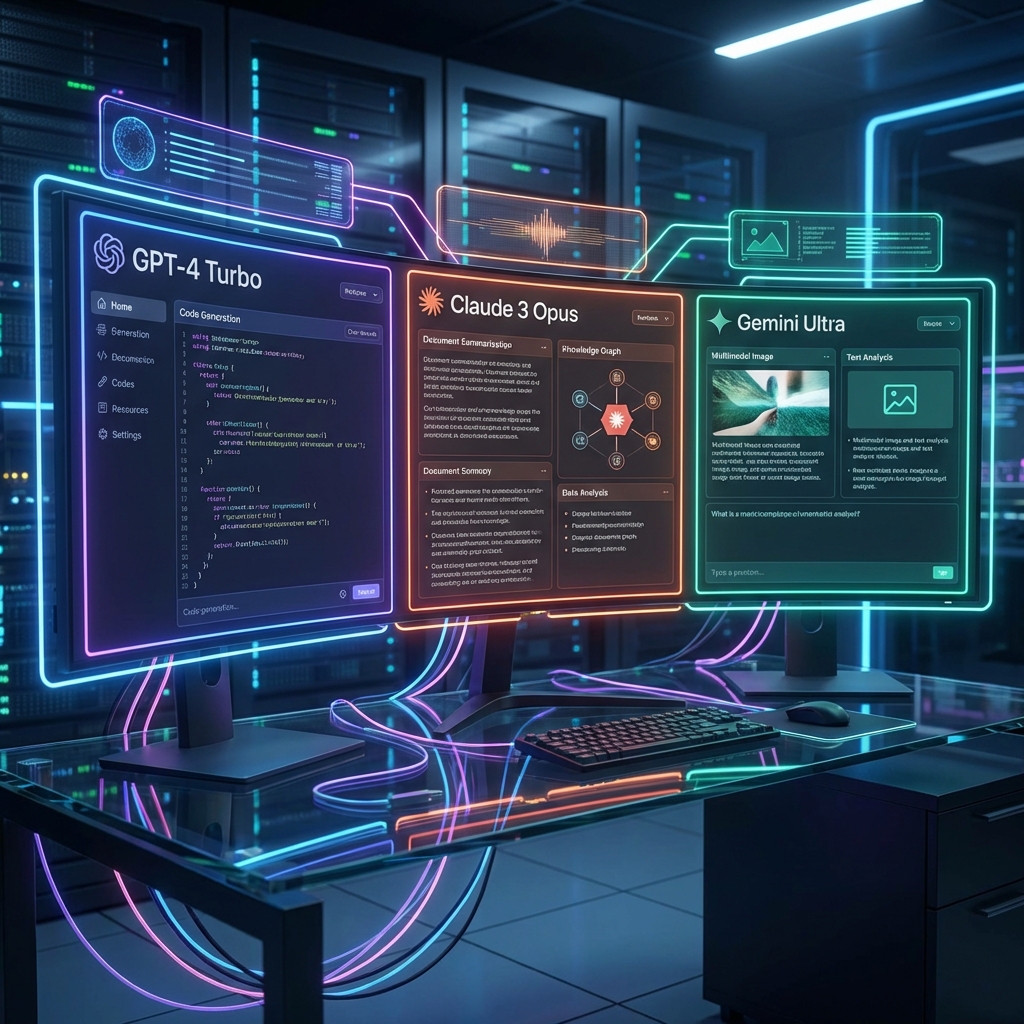

AI Playground brings over 40 models into a single, cohesive interface.

📊 Side-by-Side Comparison

Compare outputs from GPT-4o, Claude 3.5, and Gemini 1.5 Pro simultaneously. See the differences in tone, reasoning, and accuracy instantly.

⏱️ Performance Metrics

Track tokens per second and cost per 1M tokens in real time, so decisions about your AI stack rest on measurements rather than guesswork.
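
To make those two metrics concrete, here is a minimal sketch of the arithmetic behind them. It is not AI Playground's implementation: `RunStats`, `tokens_per_second`, and `run_cost_usd` are hypothetical names, and the prices in the example are placeholders, not real provider rates.

```python
from dataclasses import dataclass


@dataclass
class RunStats:
    """Token counts and wall-clock time for one model call (hypothetical shape)."""
    input_tokens: int
    output_tokens: int
    elapsed_seconds: float


def tokens_per_second(stats: RunStats) -> float:
    """Generation throughput: output tokens divided by elapsed time."""
    if stats.elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return stats.output_tokens / stats.elapsed_seconds


def run_cost_usd(
    stats: RunStats,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Cost of one call when prices are quoted per 1M tokens."""
    total = (
        stats.input_tokens * input_price_per_million
        + stats.output_tokens * output_price_per_million
    )
    return total / 1_000_000


# Placeholder numbers for illustration only, not real provider pricing.
stats = RunStats(input_tokens=1200, output_tokens=300, elapsed_seconds=2.0)
throughput = tokens_per_second(stats)          # 300 / 2.0 = 150.0
cost = run_cost_usd(stats, 2.0, 8.0)           # (2400 + 2400) / 1e6 = 0.0048
print(f"{throughput:.1f} tok/s, ${cost:.4f} per call")
```

Input and output tokens are priced separately here because providers commonly quote different rates for each; if a model uses a single rate, pass the same value twice.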

🛠️ Developer-First Features

- Batch Processing: Test your prompts against hundreds of inputs at once.

- Exportable Results: Share your findings with your team in CSV or JSON.
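
If you want a feel for what batch testing and export involve, the sketch below runs a list of prompts against several models and serializes the results to CSV and JSON using only the Python standard library. Everything here is an assumption for illustration: `run_batch`, `to_csv`, and `to_json` are hypothetical helpers, and the two "models" are local stand-in functions rather than real API calls or AI Playground's own code.

```python
import csv
import io
import json
import time
from typing import Callable

FIELDS = ["model", "prompt", "output", "latency_s"]


def run_batch(
    models: dict[str, Callable[[str], str]], prompts: list[str]
) -> list[dict]:
    """Call every model on every prompt and record output plus latency."""
    rows = []
    for prompt in prompts:
        for name, call in models.items():
            start = time.perf_counter()
            output = call(prompt)
            latency = time.perf_counter() - start
            rows.append(
                {
                    "model": name,
                    "prompt": prompt,
                    "output": output,
                    "latency_s": round(latency, 6),
                }
            )
    return rows


def to_csv(rows: list[dict]) -> str:
    """Serialize result rows to CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def to_json(rows: list[dict]) -> str:
    """Serialize result rows to pretty-printed JSON text."""
    return json.dumps(rows, indent=2)


# Stand-in "models": replace these with real client calls in practice.
models = {
    "echo-upper": str.upper,
    "echo-reversed": lambda prompt: prompt[::-1],
}

rows = run_batch(models, ["hello", "compare me"])
csv_text = to_csv(rows)
json_text = to_json(rows)
print(csv_text)
```

Keeping one row per (model, prompt) pair makes the export easy to pivot in a spreadsheet or load into a dataframe later.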

Why One Interface Wins

Consolidating your AI experimentation into one playground saves hours of R&D time otherwise spent switching between tools. It speeds up prototyping and helps you pick the best model for each job based on side-by-side results rather than habit.