A/B Testing AI Models: A Complete Guide for Product Engineers

In traditional software development, A/B testing involves changing a button color or a headline. In AI engineering, A/B testing involves changing the brain of your application.

Integrating an LLM without testing is like launching a rocket without checking the weather. You might get lucky, but you'll probably crash.

What Variables Should You Test?

When optimizing an AI feature, you have three main levers:

- The Model: e.g., GPT-4o vs. Llama 3.

- The Prompt: The system instructions given to the model.

- The Hyperparameters: Temperature, Top-P, Frequency Penalty.

The A/B Testing Workflow

Step 1: Define Your "Golden Set"

Create a dataset of 50-100 inputs that represent real user queries. You need a mix of:

- Simple queries

- Edge cases

- Adversarial attacks

Step 2: Run Batch Inferences

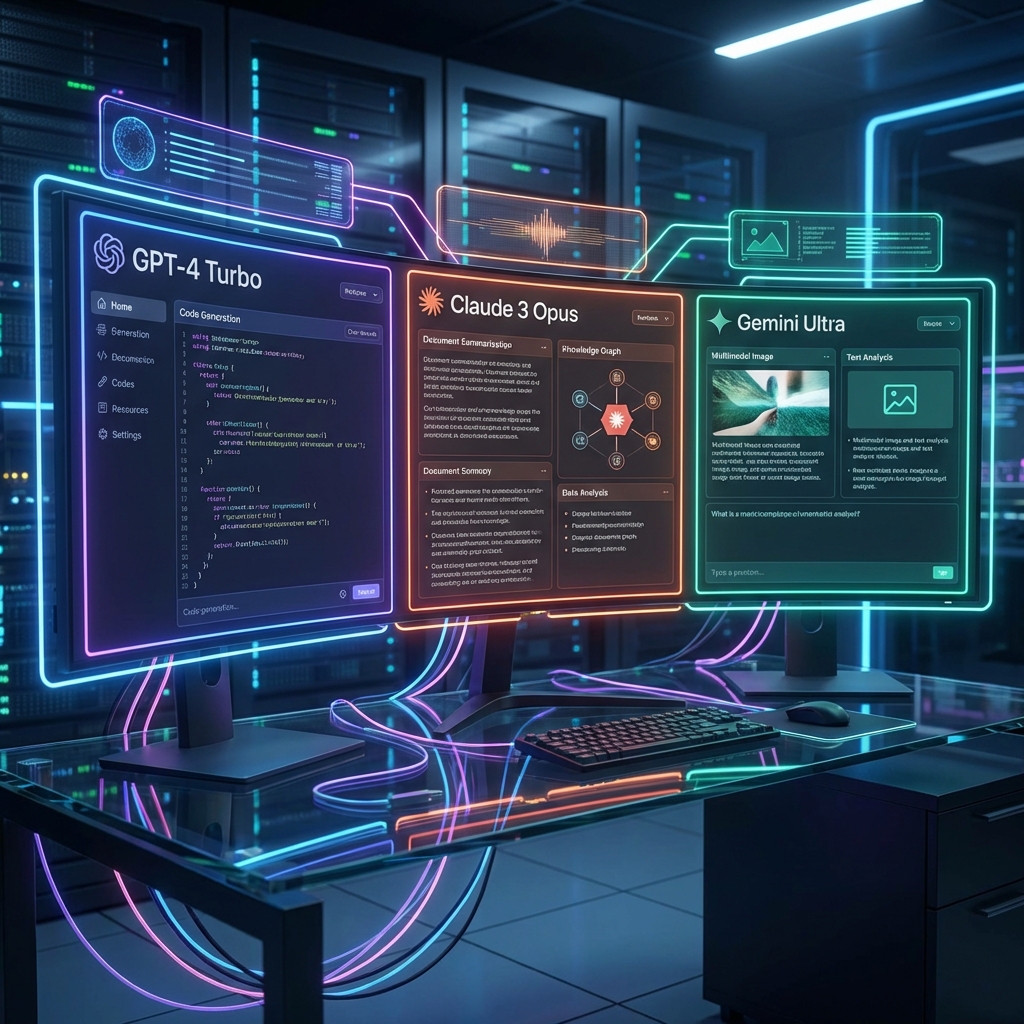

Use a tool like AI Playground to run your Golden Set against Model A and Model B simultaneously.

Step 3: Grade the Outputs

This is the hard part. You can use:

- LLM-as-a-Judge: Use a stronger model (like GPT-4) to grade the outputs of smaller models.

- Human Review: Manually inspect a random sample.

- Embedding Distance: Check for semantic similarity to a known ideal answer.

Tools of the Trade

You can build your own testing harness, or you can use existing tools. AI Playground offers built-in features for side-by-side comparison and quick iterative testing, making it an ideal starting point for A/B testing flows.

Continuous Optimization

AI models drift. A prompt that worked yesterday might fail today after a model update. Regular A/B testing ensures your product remains robust and reliable.